Free

Great for anyone to get started with our APIs.

- Build and Test on Groq

- Community Support

- Zero-data Retention Available

SPEED AND SCALE, FROM PROTOTYPE TO PRODUCTION

The AI inference platform built for developers. Fast responses, scalable performance, and costs you can plan for. Available in public, private, or co-cloud instances.

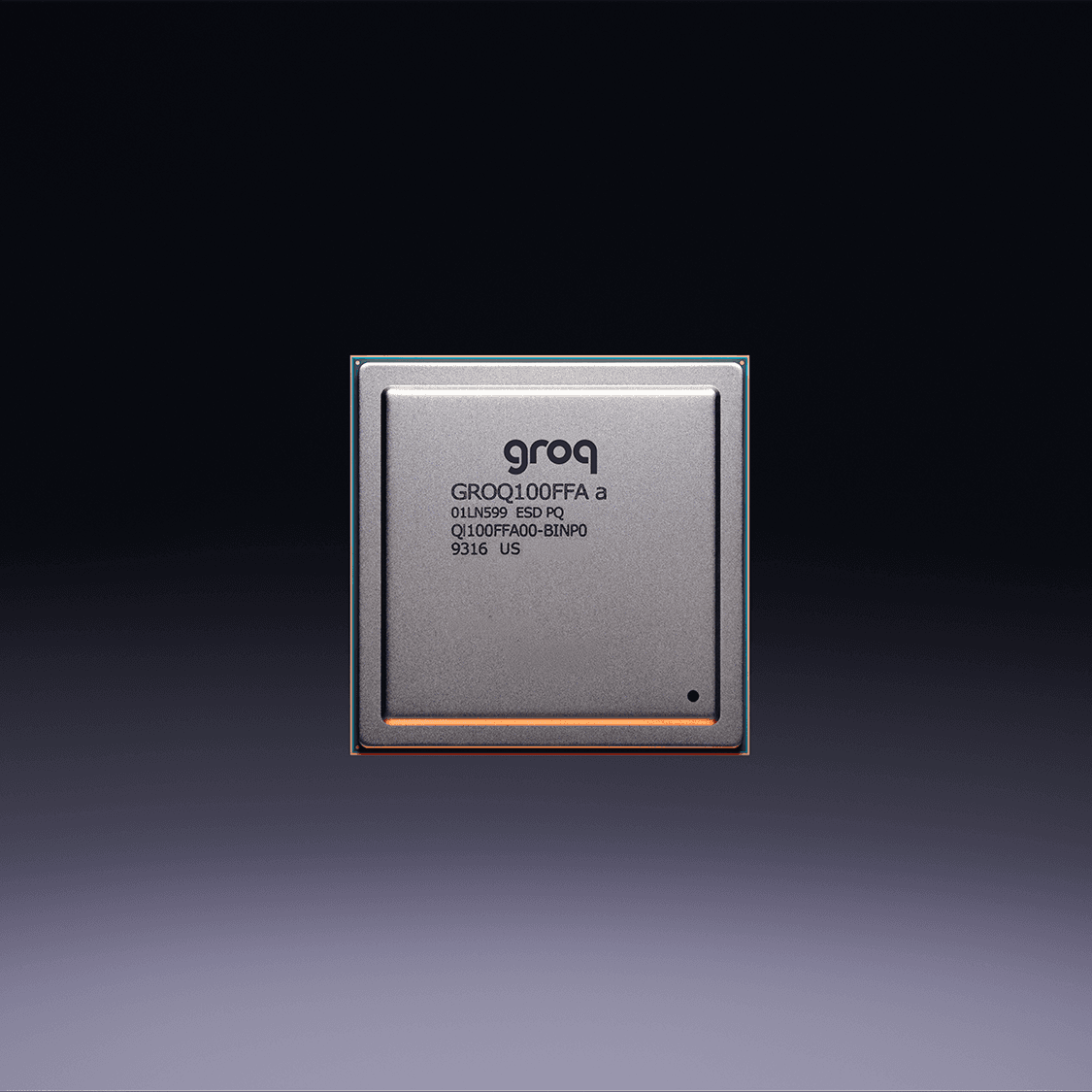

Built for speed and precision

Take advantage of fast AI inference performance, powered by our purpose-built LPU, for leading GenAI models across text, audio, and vision modalities.

Build now and scale as your needs grow

Great for developers and startups to scale up and pay as you go.

Everything on the Starter Plan, plus:

Great for businesses that require custom solutions for large-scale needs.

Everything on the Developer Plan, plus:

Lower latency means less compute time, no batching required. Record-setting performance. Usage-based.

Source: Artificial Analysis AI Adoption Survey 2025

Established in 2016 for inference, Groq is literally built different. Its LPU is the only custom-built inference chip that fuels developers with the performance they need at a cost that doesn’t hold them back.

On-Prem Optionality

Available by request, the LPU powering GroqCloud can be deployed on-prem with GroqRack. Ideal for regulated industries or air-gapped environments. Seamless transition between cloud and local deployment.

Made to scale. Deployed globally.

Online now in four regions globally. Regional availability zones for minimal latency. Auto-scaling without overhead.

Enterprise-grade data encryption. SOC 2, GDPR, and HIPAA compliant. Optional private tenancy for sensitive workloads.